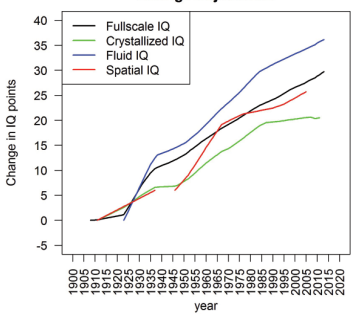

Changes in mean IQ between 1909 and 2013 (Pietschnig and Voracek 2015, p. 285)

Because of the Flynn effect, average IQ has risen by 35 points over the past century. That’s more than the difference between the threshold of mental retardation and the current average. Does that seem plausible?

In a 1984 paper, James Flynn showed that the mean IQ of White Americans rose by 13.8 points between 1932 and 1978 (Flynn 1984). When that increase, now called the Flynn effect, was charted between 1909 and 2013, the gain in IQ was found to be no less than 35 points (Pietschnig and Voracek 2015).
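
To put those two figures on the same footing, here is a minimal Python sketch that converts each total gain into an average rate per decade. The helper name `points_per_decade` is my own, and the 1909 start year is taken from the span in the title of the meta-analysis. The calculation assumes a constant rate within each period, which is only a rough approximation.

```python
def points_per_decade(gain_points: float, start_year: int, end_year: int) -> float:
    """Average IQ gain per decade, assuming a constant rate over the period."""
    years = end_year - start_year
    if years <= 0:
        raise ValueError("end_year must be later than start_year")
    return gain_points / years * 10


# Flynn (1984): 13.8 points between 1932 and 1978
flynn_rate = points_per_decade(13.8, 1932, 1978)

# Pietschnig and Voracek (2015): about 35 points between 1909 and 2013
century_rate = points_per_decade(35.0, 1909, 2013)

print(f"Flynn 1932-1978:   {flynn_rate:.2f} points/decade")    # 3.00
print(f"Century 1909-2013: {century_rate:.2f} points/decade")  # 3.37
```

Both averages come out near 3 points per decade, or about 0.3 points per year.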

The IQ gain did not happen at a uniform rate. It can be broken down into five stages:

· a small increase between 1909 and 1919 (0.80 points/decade)

· a surge during the 1920s and early 1930s (7.2 points/decade)

· a slower pace of growth between 1935 and 1947 (2.1 points/decade)

· a faster one between 1948 and 1976 (3.0 points/decade)

· a slower pace thereafter (2.3 points/decade)

The Flynn effect began in the core of the Western world and is now ending there. In fact, it has ended altogether in Norway and Sweden and has begun to reverse itself in Denmark and Finland (Pietschnig and Voracek 2015, pp. 283, 288-289).

Was it a real increase?

Average IQ has thus risen by 35 points over the past century. That’s more than the difference between the threshold of mental retardation and the current average (conventionally an IQ of 70 versus 100, a gap of 30 points). Does that seem plausible?

My mother went to high school during the 1930s, and I went during the 1970s. So my generation should be 13.8 points smarter than hers. That’s a big difference, and it should have been obvious to someone like myself who knew people from both generations.

It wasn’t obvious. My mother had a small library of books that she often consulted, mostly religious literature and works like Welcome Wilderness and Little Dorrit. Not all of her generation were obsessive readers, but many were. And the books they read weren’t light reading. Fiction typically had complex plots with subplots running alongside each other, and religious books were a maze of Biblical references that would seem obscure unless you knew the Bible, usually the King James Version. If you could handle that, you could handle string theory.

The Flynn effect also implies that post-millennials are 10 points smarter than my generation. Again, that’s not my impression. Books and movies now have simpler plots and use a smaller vocabulary, a key component of verbal intelligence. According to the General Social Survey, vocabulary test scores fell by 7.2% between the mid-1970s and the 2010s among non-Hispanic White Americans. The decline affected all levels of educational attainment, so it wasn’t just a matter of dumber people now going to college (Frost 2019; Twenge et al. 2019). The same period also saw an increase in reaction time: since the 1970s, successive birth cohorts have required more time, on average, to process the same information (Madison 2014; Madison et al. 2016).

Finally, there is the genetic evidence, specifically alleles associated with high educational attainment. In Iceland, those alleles have become steadily fewer in cohorts born since 1910 (Kong et al. 2017). The same trend has been observed between the 1931 and 1953 birth cohorts of European Americans (Beauchamp 2016). According to the Icelandic study, the downward trend is happening partly because more intelligent Icelanders are staying in school longer and postponing reproduction. But it is also happening among those who do not pursue higher education. Modern culture seems to be telling people that children are costly and bothersome, and that message is most convincing to people who like to plan ahead.

Some writers have argued that the genetic decline in intellectual potential has been more than offset by improvements to our learning environment, particularly better and longer education. This improved environment is helping us do more with our intellectual potential. But is there real-world evidence that we are, on average, becoming smarter? Robert Howard (1999, 2001, 2005) cites four lines of evidence:

· The prevalence of mild mental retardation has fallen in the US population and elsewhere.

· Chess players are reaching top performance at earlier ages.

· More journal articles and patents are coming out each year.

· According to high school teachers who have taught for over 20 years, “most reported perceiving that average general intelligence, ability to do school work, and literacy skills of school children had not risen since 1979 but most believed that children's practical ability had increased” (Howard 2001).

The above evidence is debatable, as Howard himself acknowledges. Fewer children are being diagnosed as mentally retarded because that term has become stigmatized. Prenatal screening has also had an impact. As for chess, it’s a niche activity that tells us little about the general population. More journal articles are indeed being published each year, but the reason has more to do with pressure to “publish or perish.” Finally, teachers are not objective observers: they are part of a system that rewards certain views and penalizes others. And if they reject that system, they probably won’t stick around for more than twenty years.

A last word

I suspect we’re getting better at some cognitive tasks, particularly the ones we learn at school, if only because we’re spending more of our lifetime in the classroom. One of those tasks is sitting down at a desk and taking a test. We’re better not only at that specific task but also at the broader one of thinking in terms of questions and answers. Previously, we just learned the rules and imitated those who knew better than us.

Test-taking certainly made an impression on my mental development. Long after my undergrad studies I would have nightmares of sitting alone in an immense exam hall and not knowing the answer to an insoluble question.

References

Beauchamp, J.P. (2016). Genetic evidence for natural selection in humans in the contemporary United States. Proceedings of the National Academy of Sciences 113(28): 7774–7779. https://doi.org/10.1073/pnas.1600398113

Flynn, J.R. (1984). The mean IQ of Americans: Massive gains 1932–1978. Psychological Bulletin 95(1): 29–51. https://psycnet.apa.org/doi/10.1037/0033-2909.95.1.29

Frost, P. (2019). Why is vocabulary shrinking? Evo and Proud, September 11. https://evoandproud.blogspot.com/2019/09/why-is-vocabulary-shrinking.html

Frost, P. (2020). From here it’s all downhill. Evo and Proud, March 16. https://evoandproud.blogspot.com/2020/03/from-here-its-all-downhill.html

Howard, R.W. (1999). Preliminary real-world evidence that average human intelligence really is rising. Intelligence 27: 235–250. https://doi.org/10.1016/S0160-2896(99)00018-5

Howard, R.W. (2001). Searching the real world for signs of rising population intelligence. Personality and Individual Differences 30: 1039–1058. https://doi.org/10.1016/S0191-8869(00)00095-7

Howard, R.W. (2005). Objective evidence of rising population ability: A detailed examination of longitudinal chess data. Personality and Individual Differences 38(2): 347–363. https://doi.org/10.1016/j.paid.2004.04.013

Kong, A., M.L. Frigge, G. Thorleifsson, H. Stefansson, A.I. Young, F. Zink, G.A. Jonsdottir, A. Okbay, P. Sulem, G. Masson, D.F. Gudbjartsson, A. Helgason, G. Bjornsdottir, U. Thorsteinsdottir, and K. Stefansson. (2017). Selection against variants in the genome associated with educational attainment. Proceedings of the National Academy of Sciences 114(5): E727–E732. https://doi.org/10.1073/pnas.1612113114

Madison, G. (2014). Increasing simple reaction times demonstrate decreasing genetic intelligence in Scotland and Sweden, London Conference on Intelligence. Psychological comments, April 25 #LCI14 Conference proceedings. http://www.unz.com/jthompson/lci14-questions-on-intelligence/

Madison, G., M.A. Woodley of Menie, and J. Sänger. (2016). Secular Slowing of Auditory Simple Reaction Time in Sweden (1959-1985). Frontiers in Human Neuroscience, August 18. https://doi.org/10.3389/fnhum.2016.00407

Pietschnig, J., and M. Voracek. (2015). One Century of Global IQ Gains: A Formal Meta-Analysis of the Flynn Effect (1909-2013). Perspectives on Psychological Science 10(3): 282–306. https://doi.org/10.1177/1745691615577701

Twenge, J.M., W.K. Campbell, and R.A. Sherman. (2019). Declines in vocabulary among American adults within levels of educational attainment, 1974-2016. Intelligence 76: 101377. https://doi.org/10.1016/j.intell.2019.101377